Methodology for Past Forecast Review

I downloaded a CSV file of all of my tweets and searched it for tweets containing the strings forecast*, predict*, extrapolat*, state of the art*, SOTA*, and expect*. This search may have missed a few predictions, and I’ve made some forecasts in places other than Twitter, but it has probably captured the vast majority of my predictions, as I’m pretty tweet-prone.
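
For anyone who wants to try the same kind of search on their own archive, here is a minimal sketch of how it could be done. It is not the exact procedure I used: the column names (`timestamp`, `text`), the case-insensitive matching, and the sample rows are all assumptions made for illustration.

```python
import csv
import io
import re

# Search stems from the methodology above. The trailing * in each search
# string means "any suffix", so matching the stem at a word start is enough.
STEMS = ["forecast", "predict", "extrapolat", "state of the art", "SOTA", "expect"]

# \b anchors each stem at the start of a word. Case-insensitive matching is
# an assumption, not something the methodology specifies.
PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(stem) for stem in STEMS) + r")",
    re.IGNORECASE,
)


def find_forecast_tweets(csv_file, text_column="text"):
    """Return the rows whose text column contains any of the search stems."""
    reader = csv.DictReader(csv_file)
    return [row for row in reader if PATTERN.search(row[text_column])]


# Hypothetical sample rows standing in for the downloaded archive.
SAMPLE = io.StringIO(
    "timestamp,text\n"
    "2013-06-26,My bet is CMU and IHMC do well.\n"
    "2016-01-15,Expect much NN progress.\n"
    "2016-03-12,Predicted AlphaGo victory w/ 65% confidence\n"
)

matches = find_forecast_tweets(SAMPLE)
for row in matches:
    print(row["timestamp"], row["text"])
```

The first sample row is a forecast but contains none of the search strings, so it is skipped. That is the kind of miss I mean when I say this method may not have caught everything.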

It turns out that there were a lot more forecasts than I thought (I had forgotten about many of the less rigorous ones), and they vary in their implicit (and sometimes explicit) confidence levels and in their focus (e.g. quantifiable technical achievements vs. social adoption of/responses to AI).

For each of the forecasts below, which are arranged in chronological order, I’ll reproduce the text of the tweet, and then say something about how it fared. I didn’t reproduce every single forecast-y tweet here because some are extremely vague or otherwise uninteresting, but here is a link to the spreadsheet on which this blog post was based if you’re interested/want to check (and my entire tweet history if you’re super skeptical about data missing from that curated spreadsheet).

Annotated List of Forecasts

I expected that CMU, a NASA-related team, and the Institute for Human and Machine Cognition (IHMC) would do well in the first (virtual) round of the DARPA Robotics Challenge:

Looking forward to the first DARPA Robotics Challenge results on Thursday. My bet is CMU, one of the NASA-related teams, and IHMC do well.

— Miles Brundage (@Miles_Brundage) June 26, 2013

I was two out of three with my DARPA Robotics Challenge predictions...IHMC did the best - not surprised.

— Miles Brundage (@Miles_Brundage) June 27, 2013

Later, I doubled down on this IHMC-boosterism:

If you're in Florida, consider checking out the DARPA Robotics Challenge live on Dec. 20-21 http://t.co/L41D4Wi5A4 My money is on IHMC!

— Miles Brundage (@Miles_Brundage) December 9, 2013

DARPA Robotics Challenge is Friday and Saturday! My bet is still on IHMC. Anyone else have a favorite?

— Miles Brundage (@Miles_Brundage) December 19, 2013

So now that Google-SCHAFT is out of the DRC, my prediction of IHMC doing well has retroactively improved, they won rounds 1 and 2! ;-)

— Miles Brundage (@Miles_Brundage) June 26, 2014

In early 2015, I said some things about DeepMind's likely work in 2015:

In 2015 I think DeepMind will prob demo some sort of mind blowing learning thing in a 3D world or at least much-richer-than-Atari 2D world.

— Miles Brundage (@Miles_Brundage) January 1, 2015

On error bars: wouldn't be stunned if what I said re: DeepMind demo happened in 2016 not 2015, but if not in 2016 then my model is v. wrong.

— Miles Brundage (@Miles_Brundage) January 1, 2015

Mode prediction for where in videogame chronology/complexity space DeepMind will have impressively dominated many hard games in 2016 is 2000

— Miles Brundage (@Miles_Brundage) January 1, 2015

DeepMind *could* focus on playing higher fraction of old games w/o input, but they're also simultaneously moving forward in time game-wise.

— Miles Brundage (@Miles_Brundage) January 6, 2015

DeepMind will prob someday (if they haven't already) do non-game stuff, but for now that's their metric, with some reason - it's very hard!

— Miles Brundage (@Miles_Brundage) January 1, 2015

Anti-prediction for DeepMind 2015-2016: them playing Destiny or other current video game. Way too hard/not worth their time except for fun.

— Miles Brundage (@Miles_Brundage) January 6, 2015

Another key pt on DeepMind's near-term game stuff: suspect some of the impressive results they show will *not* be fully autonomous learners.

— Miles Brundage (@Miles_Brundage) January 6, 2015

Elaboration on previous 2015-2016 DeepMind predictions: simultaneous to video game stuff, they will prob make some big progress on Go. (1/2)

— Miles Brundage (@Miles_Brundage) January 6, 2015

Ahead of the DARPA Robotics Challenge finals, I again backed IHMC:

My money is on IHMC doing well in, if not winning, DARPA Robotics Challenge finals. Will be v. interesting to see how the Chinese team does.

— Miles Brundage (@Miles_Brundage) March 21, 2015

Regarding speech recognition, in late 2015, I said:

2. Think 2016 will be year in which it's pretty clear that speech recognition is now of broad utility. Also, note role hardware played in...

— Miles Brundage (@Miles_Brundage) December 17, 2015

As part of a longer rant in 2016, I said:

6. And I no longer think massive progress in AI in, say, 10 years is implausible - now seems plausible enough to plan for possibility of it.

— Miles Brundage (@Miles_Brundage) January 8, 2016

8. I expect enough prog that "human-level AI" will be more clearly revealed as a problematic threshold, and in many domains, long surpassed.

— Miles Brundage (@Miles_Brundage) January 8, 2016

10. access to the Internet is allowed, a la https://t.co/Vs9KX98v3p

— Miles Brundage (@Miles_Brundage) January 8, 2016

Regarding hardware and neural network training speeds, I said:

2. This would affect, as prior hardware improvements have affected, three things: attainable performance, speed thereof, and iteration pace.

— Miles Brundage (@Miles_Brundage) January 15, 2016

3. And that's all just from hardware - algorithmic advances have also been rapid in recent years, though I haven't yet quantified that rate.

— Miles Brundage (@Miles_Brundage) January 15, 2016

4. Seems like a not too crazy projection is that in, say, 3 years, neural nets will be 100x faster to train, w/ big impacts on applications.

— Miles Brundage (@Miles_Brundage) January 15, 2016

6. These are just rough ideas currently - may do more rigorous calculation with error bars at some point. Point is, expect much NN progress.

— Miles Brundage (@Miles_Brundage) January 15, 2016
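
To make the "100x in 3 years" projection more concrete, here is a rough back-of-the-envelope sketch of the growth rate it would imply. It assumes smooth exponential improvement over the whole period, which the tweets above do not claim, and the variable names are just for illustration.

```python
import math

# Figures taken from the tweet: 100x faster training in about 3 years.
speedup = 100.0
years = 3.0

# Assumption: the speedup compounds at a constant rate over the period.
annual_factor = speedup ** (1.0 / years)

# Time for training speed to double under that constant rate, in months.
doubling_months = 12.0 * years * math.log(2.0) / math.log(speedup)

print(f"Implied speedup per year: {annual_factor:.2f}x")
print(f"Implied doubling time: {doubling_months:.1f} months")
```

Under that assumption, the projection works out to roughly a 4.6x speedup per year, or a doubling about every 5.4 months.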

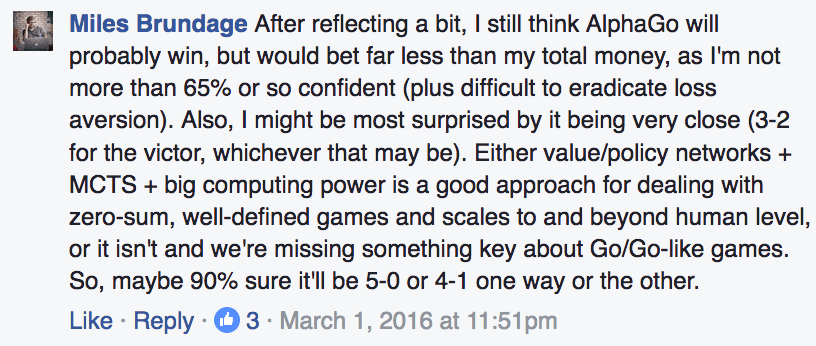

Regarding AlphaGo’s success against Lee Sedol, I said in the middle of the match:

Predicted AlphaGo victory w/ 65% confidence and 4-1/5-0 for whichever victor w/ 90% confidence, so not too late for me to be very wrong.. :)

— Miles Brundage (@Miles_Brundage) March 12, 2016

Regarding dialogue systems:

In few years, I expect impressive (by today's standards, though maybe not future revised ones) limited dialogue AIs from Goog, IBM, FB, etc.

— Miles Brundage (@Miles_Brundage) March 12, 2016

Regarding Google’s business model for AI:

@samim my expectation is that they will gradually, over next 10 years, introduce more, better, and more integrated cognition-as-a-service.

— Miles Brundage (@Miles_Brundage) March 24, 2016

Atari Forecasts

See main blog post.
